Multiplexed bi-layered realization of fault-tolerant quantum computation over optically networked trapped-ion modules

- Nitish K. Chandra

- Saikat Guha

- Kaushik P. Seshadreesan

We study an architecture for fault-tolerant measurement-based quantum

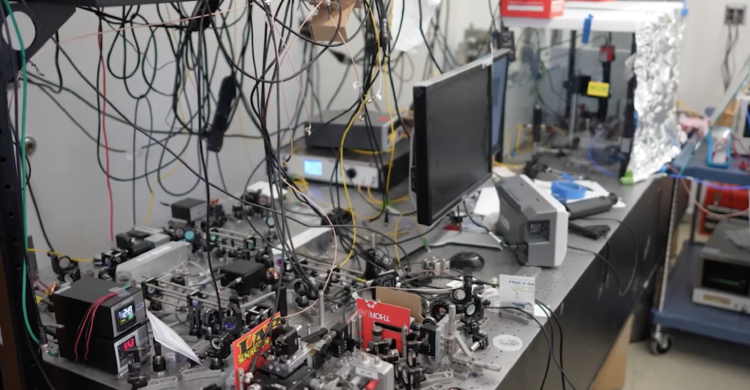

computation (FT-MBQC) over optically-networked trapped-ion modules. The

architecture is implemented with a finite number of modules and ions per

module, and leverages photonic interactions for generating remote entanglement

between modules and local Coulomb interactions for intra-modular entangling

gates. We focus on generating the topologically protected

Raussendorf-Harrington-Goyal (RHG) lattice cluster state, which is known to be

robust against lattice bond failures and qubit noise, with the modules acting

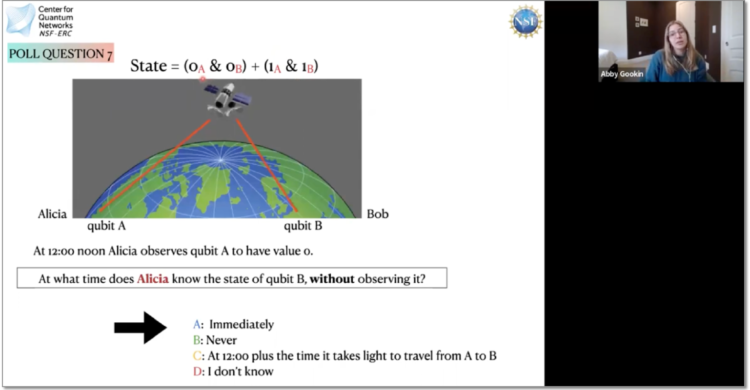

as lattice sites. To ensure that the remote entanglement generation rates

surpass the bond-failure tolerance threshold of the RHG lattice, we employ

spatial and temporal multiplexing. For realistic system timing parameters, we

estimate the code cycle time of the RHG lattice and the ion resources required

in a bi-layered implementation, where the number of modules matches the number

of sites in two lattice layers, and qubits are reinitialized after measurement.
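
The role of multiplexing can be illustrated with a minimal sketch, assuming independent heralded attempts. The per-attempt success model `eta**2 / 2` (a linear-optics Bell-state measurement that detects one photon from each ion and resolves two of the four Bell states) is an assumption, as are the hypothetical helper names and the example efficiency and threshold values; dark clicks and finite visibility are ignored. A bond is counted as failed when every one of the `spatial * temporal` attempts fails.

```python
import math


def bsm_success_prob(eta: float) -> float:
    """Per-attempt heralding probability, ASSUMED to be eta**2 / 2.

    eta is the end-to-end collection-and-detection efficiency per photon.
    Dark clicks and imperfect visibility are ignored in this sketch.
    """
    return 0.5 * eta ** 2


def temporal_modes(window_s: float, attempt_s: float) -> int:
    """Hypothetical helper: number of back-to-back attempts fitting in a time window."""
    return math.floor(window_s / attempt_s)


def multiplexed_success_prob(p: float, spatial: int, temporal: int) -> float:
    """Probability that at least one of spatial * temporal independent attempts heralds."""
    return 1.0 - (1.0 - p) ** (spatial * temporal)


def min_attempts(p: float, bond_fail_threshold: float) -> int:
    """Smallest N with (1 - p)**N <= bond_fail_threshold (bond failure at or below threshold)."""
    return math.ceil(math.log(bond_fail_threshold) / math.log(1.0 - p))


if __name__ == "__main__":
    p = bsm_success_prob(eta=0.1)                  # hypothetical efficiency
    n = min_attempts(p, bond_fail_threshold=0.1)   # hypothetical threshold value
    print(p, n, multiplexed_success_prob(p, spatial=n, temporal=1))
```

Spatial and temporal modes enter only through their product here, which is the simplification that independent attempts buys; in hardware the two are constrained differently (ions per module versus the time window available per code cycle).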

For large distances between modules, we incorporate quantum repeaters between sites and analyze the benefits in terms of cumulative resource requirements.
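
Why repeaters help at long range can be sketched by comparing per-attempt success on one long link with that on shorter elementary links. This is a simplified illustration under stated assumptions: fiber loss is given in dB/km (the value used below is hypothetical), a Bell-state measurement station sits at each link midpoint, `eta_local` lumps all non-fiber efficiencies, and success again follows the assumed `eta**2 / 2` model. Entanglement swapping, classical signaling delays, and memory waiting times, which drive cumulative resource requirements, are deliberately left out.

```python
def fiber_transmissivity(length_km: float, loss_db_per_km: float) -> float:
    """Transmissivity 10**(-alpha * L / 10) for a fiber loss of alpha dB/km."""
    return 10 ** (-loss_db_per_km * length_km / 10)


def link_success_prob(link_km: float, loss_db_per_km: float, eta_local: float) -> float:
    """Per-attempt heralding probability for one elementary link.

    ASSUMPTIONS: midpoint Bell-state measurement, so each photon crosses
    link_km / 2 of fiber; success is eta**2 / 2 for a two-photon scheme.
    """
    eta = eta_local * fiber_transmissivity(link_km / 2, loss_db_per_km)
    return 0.5 * eta ** 2


def per_segment_success(total_km: float, n_segments: int,
                        loss_db_per_km: float, eta_local: float) -> float:
    """Per-attempt success when repeaters split total_km into n_segments equal links."""
    return link_success_prob(total_km / n_segments, loss_db_per_km, eta_local)


if __name__ == "__main__":
    # Hypothetical numbers: 10 km between sites, 3 dB/km loss, 20% local efficiency.
    for n in (1, 2, 4):
        p = per_segment_success(10.0, n, 3.0, 0.2)
        print(n, p, 1.0 / p)  # segments, per-attempt success, expected attempts per link
```

Under these assumptions the per-attempt success scales as 10^(-alpha d / 10) in the link length d, so halving a link of length L raises it by a factor of 10^(alpha L / 20).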

Finally, we derive and analyze a qubit noise-tolerance threshold inequality for generating the RHG lattice in the proposed architecture that accounts for noise from various sources. These include the depolarizing noise arising from the photonically mediated remote entanglement generation between modules due to finite optical detection efficiency, limited visibility, and the presence of dark clicks, in addition to the noise from imperfect gates and measurements, and memory decoherence with time. Our work thus underscores the hardware and channel threshold requirements to realize distributed FT-MBQC in trapped ions, a leading qubit platform today.
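
How several noise sources can be folded into one effective error probability is shown in the minimal sketch below. It is not the threshold inequality derived in the paper. It assumes every source (remote-link noise, gates, measurements, memory idling) acts as an independent single-qubit depolarizing channel, which is a simplification for two-qubit link noise and measurement errors. It also uses a hypothetical exponential memory model and a user-supplied threshold `p_threshold`.

```python
import math
from typing import Iterable


def compose_depolarizing(probs: Iterable[float]) -> float:
    """Effective probability of sequential single-qubit depolarizing channels.

    Each channel acts as rho -> (1 - p) * rho + p * I/2. Because I/2 is a fixed
    point, the channels compose into one with p_eff = 1 - prod(1 - p_i).
    """
    survive = 1.0
    for p in probs:
        survive *= 1.0 - p
    return 1.0 - survive


def memory_depolarizing(wait_s: float, coherence_s: float) -> float:
    """HYPOTHETICAL memory model: depolarizing probability 1 - exp(-t / tau)."""
    return 1.0 - math.exp(-wait_s / coherence_s)


def within_budget(p_link: float, p_gate: float, p_meas: float,
                  wait_s: float, coherence_s: float, p_threshold: float) -> bool:
    """Compare the composed error with a user-supplied threshold (not the paper's inequality)."""
    p_mem = memory_depolarizing(wait_s, coherence_s)
    return compose_depolarizing([p_link, p_gate, p_meas, p_mem]) < p_threshold
```

Composing the channels multiplicatively, rather than summing the probabilities, keeps the effective error below one for any inputs; for small probabilities the two agree to first order.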